Confirmation Bias

Confirmation bias is the tendency to notice, favour, and remember information that supports what we already believe, while overlooking or discounting what does not.

We don’t just form opinions; we construct entire cases for them.

What it is – and why it’s useful

Once an idea is formed, our mind quickly weighs new evidence against that belief, keeping what fits and pushing aside everything that doesn’t.

The concept is closely associated with the work of Peter Wason, whose experiments in the 1960s showed how strongly people prefer information that confirms their existing ideas. Later, psychologists Daniel Kahneman and Amos Tversky broadened our understanding of how these mental shortcuts shape human thinking.

The more general point is that human thinking is not as objective as we like to imagine. We rely on mental shortcuts that help us make sense of the world quickly, even if that sometimes comes at the cost of the truth.

In practice, confirmation bias manifests in a simple yet powerful pattern.

We are more likely to:

- notice information that supports our existing view

- trust it more when we see it

- remember it more clearly

- overlook, dismiss, or explain away anything that contradicts it

This is not because we are trying to deceive ourselves. It is because the brain prefers consistency. It likes patterns and order. Once a belief is in place, it becomes a kind of filter, shaping what we see and how we interpret it.

Consistency keeps us from procrastinating over decisions (we’ve already done that thinking), but risks distorting reality.

Real-life examples

If your first impression of someone is that they are warm, rude, competent, judgemental, or a jobsworth, confirmation bias means you are likely to interpret their later behaviour through that lens.

I once decided a woman on the school run was rude because she did not return my ‘good morning’. I kept that opinion for weeks. Later that term, we both helped at a school event, and I found out she was a) deaf, and b) delightful and funny.

I had built a whole story out of almost no information, and the little bit I did have was false. I was utterly mortified. (To be fair, she thought it was hilarious when I came clean.)

At work, you might decide that someone is bitchy. From that moment on, your attention laser is trained on anything that proves you right.

No ‘best wishes’ at the bottom of their email – just their name.

Noticing them speaking quietly to a colleague over lunch (what are they planning?)

A witty comment that everyone laughs at.

Each becomes a piece of evidence. Meanwhile, the rest of the time, they are helpful, cooperative, efficient, funny or unremarkable. But that tends to go unnoticed as you build an impressive internal case for the prosecution.

What a bitch.

Our self-image gets the confirmation bias treatment, too.

If you decide you are undisciplined, every digestive biscuit or missed workout reinforces that idea. Each time you actually do get to the gym, you dismiss it as a one-off or a fluke. The ‘undisciplined’ identity remains intact, supported by all your carefully selected evidence.

What you believe about yourself shapes what you notice about your behaviour, which in turn strengthens the belief. And so on.

In relationships, if you believe someone does not care about you, you focus your attention on what they don’t do.

An hour to reply to your text.

No romantic flowers like your friend gets from her partner.

Your own bin-takings-out going unthanked.

At the same time, the effort they do make gets written off as the least they could do. The story you construct becomes self-reinforcing.

The more you look for signs that they do not care, the more you find them.

Health and lifestyle choices are another good example. Once someone commits to a new regime – a diet, a training method, or a well-being hack – they tend to seek out voices and evidence that support it. Articles, groups, anecdotes, and professional opinions that reinforce their view feel convincing. They question, minimise, or ignore evidence that is uncomfortable or contradictory.

Over time, it can feel as though the evidence is overwhelmingly in favour of their approach, when in reality, it has just been filtered that way.

Our ‘right’ bubble is very comfortable; we use it to shelter from the whole truth, and we instinctively keep it intact.

We are also, almost without exception, the hero in our own story.

Once we see ourselves as reasonable, well-intentioned, hard done by, or doing our best, we start collecting evidence to support that version of events. The same goes if we think we are a useless waste of space. Our story stays intact, not because it is true, but because we keep feeding it the right material.

Why it matters

Because our thoughts influence our actions, confirmation bias can have a huge impact on the choices we make. It can keep us stuck in out-of-date patterns that are no longer useful. It can reinforce identities that limit our growth.

It can lead to bad decisions that feel absolutely justified.

It is also hugely relevant in conflict.

Two people can look at the same situation and come away with entirely different conclusions, each sure they are right. The problem is that they are using different pieces of information and interpreting them through different filters.

Each of them is building a case, but they are not using the same evidence.

Debate has traditionally been a respected discipline.

In the current landscape, disagreement can often be seen as adversarial or argumentative. Part of that shift may be cultural, and part may be structural.

Whether it is politics, religion, or sport, we instinctively seek out the voices that already agree with us, and can see the other side as the enemy.

Collective opinions have become personalised online, and are increasingly filtered by what we have already engaged with. The more we click, like, and agree, the more of the same we are shown. Over time, it becomes harder to even encounter opposing views naturally and easier to assume that our perspective is the obvious one.

When we do, by chance, see something opposed to our view, we are so unused to it that it can seem outrageous. What was once a difference of opinion can feel like a personal attack or a moral failing.

When two people’s worldviews are far enough apart, the gap itself can feel threatening – not just uncomfortable, but wrong in a way that seems to demand some kind of explanation. I expect you have seen this play out publicly: someone expresses a view that sits outside another group’s accepted thinking, and the reaction is not debate but outright condemnation. No polite: “You, sir – I must disagree with you, in the strongest terms”, but “You cannot say that.”

The mismatch between belief systems, when extreme enough, can end careers, friendships, and reputations – not because the person was dishonest, but because their map of the world just didn’t look anything like someone else’s.

And yet, for all the damage it can do, confirmation bias is not entirely negative.

Finding people with similar views can boost our confidence and help us stay consistent in our actions. It lets us move forward through life without constantly re-examining every belief we hold.

The trouble starts when it goes unchecked. If the evidence you collect is biased, you can be 100% certain of a conclusion you’ve come to, whilst simultaneously being 100% wrong about it.

The principle running through all of this is that certainty feels lovely, as does being accurate. The two are not always the same thing.

We go Googling like a barrister, building a case, rather than like a scientist rigorously testing one.

Try this today

Take something you currently believe about yourself, another person, or a situation, and ask a few uncomfortable questions:

- What would prove this wrong?

- What evidence have I overlooked?

- What would someone who disagrees with me point out?

The aim is not to abandon your view, but to examine it more honestly. If you were building a case for the opposition, what would it be?

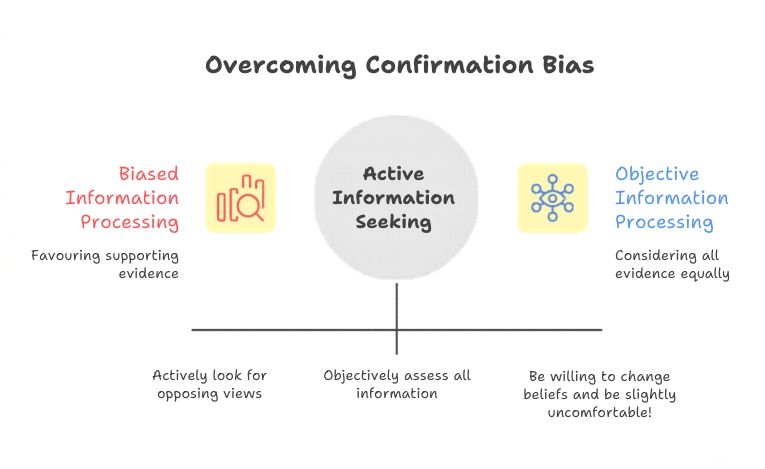

It can also be useful to actively look for evidence that does not fit.

As well as gathering more support for what you already think, balance it by seeking out information that questions it. This takes willingness to tolerate uncertainty, but it is one of the most effective ways to resist the seduction of confirmation bias and get closer to the bigger picture.

Some questions to think about

- What do I feel most sure about in my life?

- Which opinions have I held for a long time without re-examining them?

- What stories do I tell myself about who I am, and what I can and can’t do?

- Is there anything I blindly believe without testing it?

Optional challenge

When you find yourself forming a strong opinion, try one of these:

- Argue the opposite side as if you had to defend it robustly.

- Ask someone you trust what details you might be missing.

- Look for one piece of reliable evidence that does not fit your current view.

The aim is not to change your point of view, but to stretch its boundaries.

A Buddh-ish take

Acharya Buddharakkhita’s translation of The Dhammapada starts with:

“Mind precedes all mental states. Mind is their chief; they are all mind-wrought.”

I take this to mean that the mind is not a neutral camera. It has a built-in filter that guides our experience through what it notices, how it interprets events, and what it likes to stick with.

Wisdom lies in loosening our grip on being right and in being more interested in seeing all points of view clearly.
